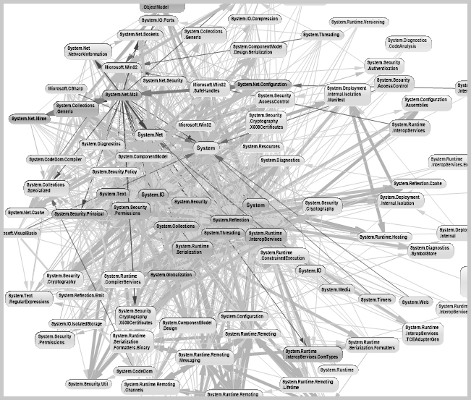

Tight coupling, cyclic dependencies and poorly separated concerns are the main attributes that define a Big Ball of Mud architecture. Such monolithic systems are very difficult to maintain, where maintainability covers testability, interchangeability, extensibility, deployability, scalability and comprehensibility. A Big Ball of Mud can occur at every system level, in Application-To-Application, Feature-To-Feature, Component-To-Component or Class-To-Class relationships, but also at the organizational level, for example in Team-To-Team relationships.

Typical causes are "Beginning without design", "Obscurity of requirements" or "Ignoring software entropy". A Big Ball of Mud compromises long-term success: the entire system is difficult to maintain, and a quick Time-To-Market is no longer possible. This often leads to a rewrite, which is hell for all stakeholders. A better approach is to create an Emergent Design, which is easy to maintain and reacts flexibly to changes.

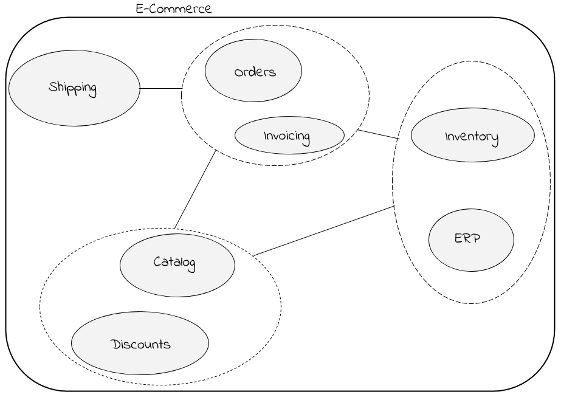

Context Map

Working out a plan before starting the implementation improves the chances of long-term success. The plan should not be detailed for each feature as in a waterfall process, but a rough one that can be followed in iterations. The functional requirements and their relationships to each other are often very complex. To reduce this complexity, they have to be grouped into domains within a Context Map.

Context Mapping is important and should be the first step towards an Emergent Design. It helps everybody keep the big picture in mind and is essential for making strategic decisions such as:

- Which feature belongs to which domain?

- Is a distributed system required?

- Can we bound domains to a subsystem?

- How many teams are needed to support all domains and how to organize them?
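A Context Map is usually drawn as a diagram, but its core information can also be kept as plain data. The following minimal Python sketch assumes a hypothetical shop system: the domain names (`catalog`, `ordering`, `billing`), the features and the helpers `feature_index`, `ambiguous_features` and `domain_of` are illustrative only. It answers the first question above for a single feature and flags features that were assigned to more than one domain.

```python
from collections import defaultdict

# Hypothetical context map: each domain lists the features it owns.
CONTEXT_MAP: dict[str, set[str]] = {
    "catalog": {"search products", "show product details"},
    "ordering": {"place order", "cancel order"},
    "billing": {"create invoice", "refund payment"},
}


def feature_index(context_map: dict[str, set[str]]) -> dict[str, list[str]]:
    """Invert the map: feature -> list of domains claiming it."""
    index: dict[str, list[str]] = defaultdict(list)
    for domain, features in context_map.items():
        for feature in features:
            index[feature].append(domain)
    return dict(index)


def ambiguous_features(context_map: dict[str, set[str]]) -> dict[str, list[str]]:
    """Features owned by more than one domain hint at a poorly cut boundary."""
    return {f: sorted(d) for f, d in feature_index(context_map).items() if len(d) > 1}


def domain_of(feature: str, context_map: dict[str, set[str]] = CONTEXT_MAP) -> str:
    """Return the single domain owning a feature, or raise if it has 0 or 2+ owners."""
    domains = feature_index(context_map).get(feature, [])
    if len(domains) != 1:
        raise LookupError(f"{feature!r} belongs to {len(domains)} domains: {domains}")
    return domains[0]


if __name__ == "__main__":
    print(domain_of("place order"))          # ordering
    print(ambiguous_features(CONTEXT_MAP))   # {}
```

Keeping the map as data makes it reviewable alongside the code, and an ambiguous feature is an early signal that a domain boundary needs another look.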

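According to Conway's Law, a system's structure tends to mirror the communication structure of the organization that builds it. It is therefore very important that the team organization reflects the planned architecture; otherwise, the organization ends up driving the architecture.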

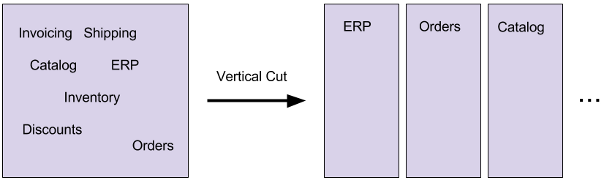

Based on the Context Map, the entire system is sliced into its domains (vertical cut). From then on, the domains can be implemented as software modules (packages), which reduces the overall complexity considerably. Modules are highly cohesive and loosely coupled to each other. As a result, a change request ideally affects only one module, which is a big benefit for maintainability.

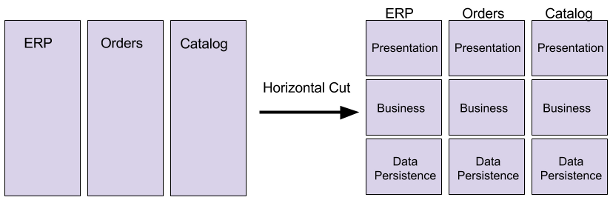

Modularization

Each module is implemented in iterations, and besides functional requirements it also has non-functional, or rather technical, requirements. Technical decisions are made during planning. This is generally risky, because the decisions depend on the current requirements. Decisions should not try to anticipate unknown future changes, but they should be easy to replace. Hence a module has to be layered by its technical aspects (horizontal cut), such as Presentation, Business and Data Persistence.

One fundamental rule is that the module is only accessible through its presentation layer. Another fundamental rule is that layers depend only from top to bottom. Modules often depend on vendors (third-party libraries) such as an ORM framework. To make vendors interchangeable as well, they should be abstracted according to the Dependency Inversion Principle. A layered module improves testability because each layer can be tested in isolation. The same is true for interchangeability: a storage medium, for instance, can be substituted easily because it is not entangled with the other layers. But the most important gain is that the business layer is isolated, which greatly improves comprehensibility.
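A minimal Python sketch of a layered module that applies the Dependency Inversion Principle to its storage vendor. All names (`Order`, `OrderRepository`, `OrderService`, `InMemoryOrderRepository`, `OrderController`) are hypothetical. The business layer owns the abstraction it needs, and the persistence layer implements it, so the persistence code now depends on the business abstraction rather than the other way round; that is the "inversion". An ORM-backed repository could replace the in-memory one without touching the business code.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Optional


# --- Business layer: domain model plus the abstraction it needs ---
@dataclass(frozen=True)
class Order:
    order_id: str
    total_cents: int


class OrderRepository(ABC):
    """Port owned by the business layer; storage vendors implement it."""

    @abstractmethod
    def save(self, order: Order) -> None: ...

    @abstractmethod
    def find(self, order_id: str) -> Optional[Order]: ...


class OrderService:
    """Business logic that knows only the OrderRepository abstraction."""

    def __init__(self, repository: OrderRepository) -> None:
        self._repository = repository

    def place_order(self, order_id: str, total_cents: int) -> Order:
        if total_cents <= 0:
            raise ValueError("an order must have a positive total")
        order = Order(order_id, total_cents)
        self._repository.save(order)
        return order


# --- Data persistence layer: one interchangeable implementation ---
class InMemoryOrderRepository(OrderRepository):
    """Stand-in for an ORM-backed repository; also handy in unit tests."""

    def __init__(self) -> None:
        self._orders: dict[str, Order] = {}

    def save(self, order: Order) -> None:
        self._orders[order.order_id] = order

    def find(self, order_id: str) -> Optional[Order]:
        return self._orders.get(order_id)


# --- Presentation layer: the module's only entry point, calls downwards ---
class OrderController:
    def __init__(self, service: OrderService) -> None:
        self._service = service

    def post_order(self, payload: dict) -> dict:
        order = self._service.place_order(payload["id"], payload["total_cents"])
        return {"status": "created", "id": order.order_id}


# Composition root: the only place that names the concrete storage class.
repository = InMemoryOrderRepository()
controller = OrderController(OrderService(repository))
```

Swapping the storage medium means writing another `OrderRepository` subclass and changing only the composition root; `OrderService` can be unit-tested with the in-memory implementation.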

So layering is a big help in tackling complexity and improving maintainability.

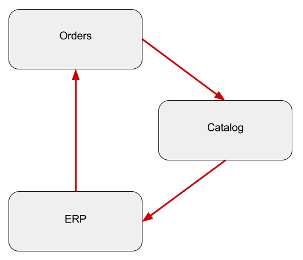

Dependencies

Modules have to communicate with each other, and dependencies appear. This is true whether the modules are distributed across several applications or composed into one application. In general, dependencies are harmful because changes on one side affect the other side. A popular approach to decoupling here is a message-based system: a module sends a single-purpose event to a dispatcher, where a registered listener can delegate a new command to another module. This also enables an Asynchronous Architecture for building highly scalable applications, as well as Aspect-Oriented Programming for decoupling Cross-Cutting Concerns.
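The event flow described above can be sketched in a few lines of Python. This is a minimal, synchronous, in-process illustration; the `Dispatcher`, `OrderPlaced`, `OrderingModule` and `BillingModule` names are hypothetical. The ordering module only knows the dispatcher, and a registered listener translates the event into a command for the billing module. A production system would more likely use a message broker with asynchronous delivery.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class OrderPlaced:
    """Single-purpose event published by the ordering module."""
    order_id: str
    total_cents: int


class Dispatcher:
    """Routes events to listeners registered for their exact type."""

    def __init__(self) -> None:
        self._listeners: dict[type, list[Callable[[object], None]]] = defaultdict(list)

    def register(self, event_type: type, listener: Callable[[object], None]) -> None:
        self._listeners[event_type].append(listener)

    def dispatch(self, event: object) -> None:
        # Events without listeners are silently ignored.
        for listener in self._listeners[type(event)]:
            listener(event)


class BillingModule:
    """Receiving module; exposes a command and knows nothing about ordering."""

    def __init__(self) -> None:
        self.invoices: list[tuple[str, int]] = []

    def create_invoice(self, order_id: str, amount_cents: int) -> None:
        self.invoices.append((order_id, amount_cents))


class OrderingModule:
    """Sending module; depends only on the dispatcher."""

    def __init__(self, dispatcher: Dispatcher) -> None:
        self._dispatcher = dispatcher

    def place_order(self, order_id: str, total_cents: int) -> None:
        # Business logic for placing the order would run here.
        self._dispatcher.dispatch(OrderPlaced(order_id, total_cents))


# Wiring: the listener translates the event into a command for billing.
dispatcher = Dispatcher()
billing = BillingModule()
dispatcher.register(
    OrderPlaced,
    lambda event: billing.create_invoice(event.order_id, event.total_cents),
)
ordering = OrderingModule(dispatcher)
ordering.place_order("o-42", 4999)
```

Adding another consumer, for example a shipping module, means registering one more listener; `OrderingModule` stays unchanged.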

Dependencies can exist at every system level, technical as well as organizational. What matters is that the number of dependencies is kept to a minimum and that they are acyclic. Circular dependencies can cause many unwanted side effects and prevent modules from being reused separately.
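Whether dependencies are acyclic can be checked mechanically. This Python sketch assumes the module dependency graph is already available as a dictionary (the module names are hypothetical) and uses a depth-first search with three node colors to report one cycle, if any. The recursive search is fine for module-level graphs but is not meant for very deep graphs.

```python
from typing import Optional

WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, finished


def find_cycle(graph: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one dependency cycle as a list of module names, or None if acyclic."""
    color: dict[str, int] = {}
    path: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        color[node] = GRAY
        path.append(node)
        for dependency in graph.get(node, []):
            state = color.get(dependency, WHITE)
            if state == GRAY:
                # Back edge: the cycle starts where `dependency` entered the path.
                return path[path.index(dependency):] + [dependency]
            if state == WHITE:
                cycle = visit(dependency)
                if cycle:
                    return cycle
        path.pop()
        color[node] = BLACK
        return None

    for module in graph:
        if color.get(module, WHITE) == WHITE:
            cycle = visit(module)
            if cycle:
                return cycle
    return None


if __name__ == "__main__":
    acyclic = {"ordering": ["billing", "catalog"], "billing": ["catalog"], "catalog": []}
    cyclic = {"ordering": ["billing"], "billing": ["catalog"], "catalog": ["ordering"]}
    print(find_cycle(acyclic))  # None
    print(find_cycle(cyclic))   # ['ordering', 'billing', 'catalog', 'ordering']
```

Run as part of the test suite, such a check turns "dependencies must be acyclic" from a guideline into a failing build.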

Final Thought

Slicing a software system vertically and horizontally into small pieces in the right way is difficult. There are many architecture patterns, and which one to prefer depends on the use case. But the idea is always the same: Separation of Concerns.

Additionally, requirements often change in response to market changes, so the entire system is subject to Software Entropy. This leads to technical debt, which has to be tackled continuously to avoid falling back into Big Ball of Mud structures. Therefore, Emergent Design requires close collaboration between skilled developers and skilled stakeholders, proactive dependency management, high test coverage, recurring analysis of code and context, and an open mindset towards refactoring. An Emergent Design is fundamental for agile processes, supports a quick Time-To-Market and improves all aspects of maintainability.